Small Image CNN

author: Juma Shafara date: "2024-08-12" title: Convolutional Neural Network with Small Images keywords: [Training Two Parameter, Mini-Batch Gradient Descent, Training Two Parameter Mini-Batch Gradient Descent] description: In this lab, we will use a Convolutional Neural Network to classify horizontal and vertical lines

Objective for this Notebook

1. Learn how to use a Convolutional Neural Network to classify handwritten digits from the MNIST database

2. Learn how to reshape the images to make them faster to process

Table of Contents

In this lab, we will use a Convolutional Neural Network to classify handwritten digits from the MNIST database. We will reshape the images to make them faster to process.

- Get Some Data

- Convolutional Neural Network

- Define Softmax, Criterion function, Optimizer and Train the Model

- Analyze Results

Estimated Time Needed: 25 min (14 min to train the model)

Preparation

# Import the libraries we need to use in this lab
# Use the following line to install the torchvision library
# !mamba install -y torchvision
!pip install torchvision==0.9.1 torch==1.8.1

import torch
import torch.nn as nn
import torchvision.transforms as transforms
import torchvision.datasets as dsets
import matplotlib.pylab as plt
import numpy as np

Define the function plot_channels to plot out the kernel parameters of each channel

# Define the function for plotting the channels
def plot_channels(W):
    n_out = W.shape[0]
    n_in = W.shape[1]
    w_min = W.min().item()
    w_max = W.max().item()
    fig, axes = plt.subplots(n_out, n_in)
    fig.subplots_adjust(hspace=0.1)
    out_index = 0
    in_index = 0

    # Plot outputs as rows and inputs as columns
    for ax in axes.flat:
        if in_index > n_in - 1:
            out_index = out_index + 1
            in_index = 0
        ax.imshow(W[out_index, in_index, :, :], vmin=w_min, vmax=w_max, cmap='seismic')
        ax.set_yticklabels([])
        ax.set_xticklabels([])
        in_index = in_index + 1

    plt.show()

Define the function plot_parameters to plot out the kernel parameters of each channel with multiple outputs.

# Define the function for plotting the parameters
def plot_parameters(W, number_rows=1, name="", i=0):
    W = W.data[:, i, :, :]
    n_filters = W.shape[0]
    w_min = W.min().item()
    w_max = W.max().item()
    fig, axes = plt.subplots(number_rows, n_filters // number_rows)
    fig.subplots_adjust(hspace=0.4)

    for i, ax in enumerate(axes.flat):
        if i < n_filters:
            # Set the label for the sub-plot.
            ax.set_xlabel("kernel:{0}".format(i + 1))

            # Plot the image.
            ax.imshow(W[i, :], vmin=w_min, vmax=w_max, cmap='seismic')
            ax.set_xticks([])
            ax.set_yticks([])

    plt.suptitle(name, fontsize=10)
    plt.show()

Define the function plot_activations to plot out the activations of the convolutional layers

# Define the function for plotting the activations
def plot_activations(A, number_rows=1, name="", i=0):
    A = A[0, :, :, :].detach().numpy()
    n_activations = A.shape[0]
    A_min = A.min().item()
    A_max = A.max().item()
    fig, axes = plt.subplots(number_rows, n_activations // number_rows)
    fig.subplots_adjust(hspace=0.4)

    for i, ax in enumerate(axes.flat):
        if i < n_activations:
            # Set the label for the sub-plot.
            ax.set_xlabel("activation:{0}".format(i + 1))

            # Plot the image.
            ax.imshow(A[i, :], vmin=A_min, vmax=A_max, cmap='seismic')
            ax.set_xticks([])
            ax.set_yticks([])

    plt.show()

Define the function show_data to plot out data samples as images.
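The body of show_data does not appear in this text. A minimal sketch of what such a helper might look like, assuming each sample is an (image, label) pair and assuming IMAGE_SIZE = 16 (the side length implied by the out_2 * 4 * 4 input of the fully connected layer used later in this lab):

```python
import numpy as np
import matplotlib.pyplot as plt

IMAGE_SIZE = 16  # assumed side length of the resized images


def show_data(data_sample):
    # data_sample is assumed to be an (image, label) pair, as returned by
    # indexing a torchvision dataset; the image may be a tensor or an array
    image = np.asarray(data_sample[0]).reshape(IMAGE_SIZE, IMAGE_SIZE)
    plt.imshow(image, cmap='gray')
    plt.title('y = ' + str(data_sample[1]))
```

The image is drawn in grayscale and the label is shown in the plot title, so a call such as show_data(train_dataset[3]) would display the fourth training sample together with its label.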

Get the Data

We create a transform to resize the image and convert it to a tensor.

Load the training dataset by setting the parameter train to True. We use the transform defined above.

Load the testing dataset by setting the parameter train to False.

We can see the data type is long.

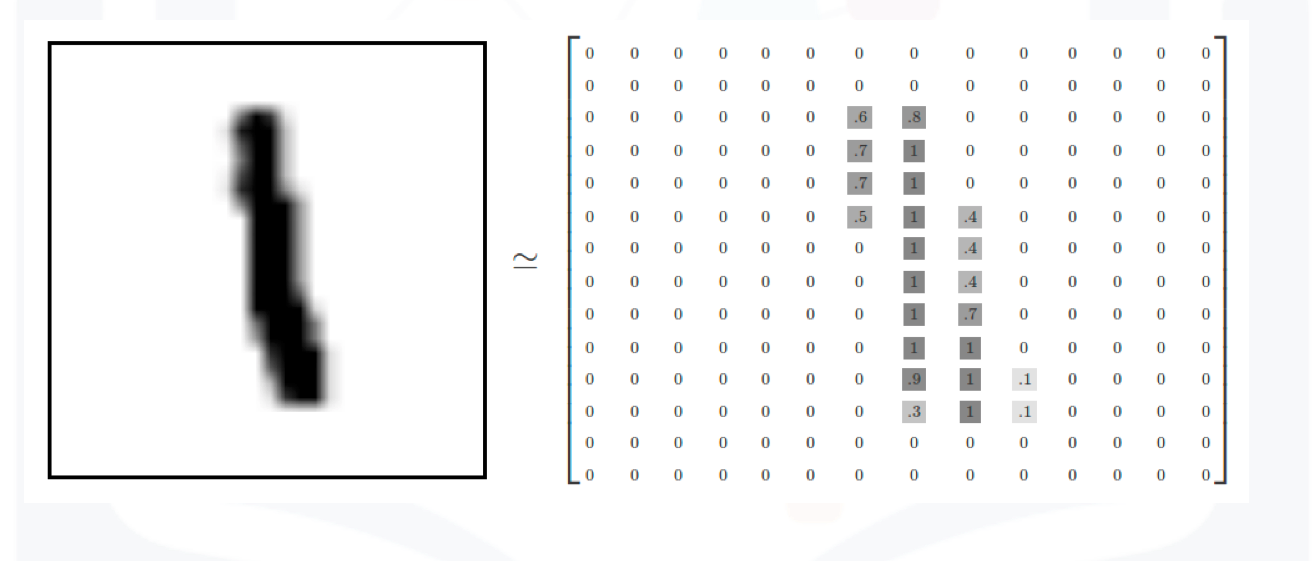

Each element in the rectangular tensor corresponds to a number representing a pixel intensity as demonstrated by the following image.

Print out the fourth label

Plot the fourth sample

The fourth sample is a "1".

Build a Convolutional Neural Network Class

Build a Convolutional Network class with two Convolutional layers and one fully connected layer. Pre-determine the size of the final output matrix. The parameters in the constructor are the number of output channels for the first and second layer.

class CNN(nn.Module):

    # Constructor
    def __init__(self, out_1=16, out_2=32):
        super(CNN, self).__init__()
        self.cnn1 = nn.Conv2d(in_channels=1, out_channels=out_1, kernel_size=5, padding=2)
        self.maxpool1 = nn.MaxPool2d(kernel_size=2)
        self.cnn2 = nn.Conv2d(in_channels=out_1, out_channels=out_2, kernel_size=5, stride=1, padding=2)
        self.maxpool2 = nn.MaxPool2d(kernel_size=2)
        self.fc1 = nn.Linear(out_2 * 4 * 4, 10)

    # Prediction
    def forward(self, x):
        x = self.cnn1(x)
        x = torch.relu(x)
        x = self.maxpool1(x)
        x = self.cnn2(x)
        x = torch.relu(x)
        x = self.maxpool2(x)
        x = x.view(x.size(0), -1)
        x = self.fc1(x)
        return x

    # Outputs at each step
    def activations(self, x):
        # Returns the intermediate outputs; not needed for training
        z1 = self.cnn1(x)
        a1 = torch.relu(z1)
        out = self.maxpool1(a1)
        z2 = self.cnn2(out)
        a2 = torch.relu(z2)
        out1 = self.maxpool2(a2)
        # Flatten the second pooling output, matching forward()
        out = out1.view(out1.size(0), -1)
        return z1, a1, z2, a2, out1, out
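The factor 4 * 4 in fc1 follows from the layer settings. A convolution or pooling layer with kernel size k, stride s and padding p maps a side length n to floor((n + 2p - k) / s) + 1. The sketch below applies this rule to the layers of the class, assuming 16 x 16 input images (the input size is not stated in this section) and using a hypothetical helper named out_size:

```python
def out_size(n, kernel_size, stride=1, padding=0):
    # Side length after a convolution or pooling layer (dilation 1, floor mode)
    return (n + 2 * padding - kernel_size) // stride + 1


size = 16                                         # assumed input side length
size = out_size(size, kernel_size=5, padding=2)   # cnn1 keeps the size: 16
size = out_size(size, kernel_size=2, stride=2)    # maxpool1 halves it: 8
size = out_size(size, kernel_size=5, padding=2)   # cnn2 keeps the size: 8
size = out_size(size, kernel_size=2, stride=2)    # maxpool2 halves it: 4

out_2 = 32
fc_inputs = out_2 * size * size                   # 32 * 4 * 4 = 512
print(size, fc_inputs)
```

nn.MaxPool2d uses a stride equal to its kernel size by default, which is why stride=2 is passed for the pooling layers. A different input size would require a different first argument to nn.Linear.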

Define the Convolutional Neural Network Classifier, Criterion function, Optimizer and Train the Model

There are 16 output channels for the first layer, and 32 output channels for the second layer

Plot the model parameters for the kernels before training the kernels. The kernels are initialized randomly.

Define the loss function, the optimizer and the dataset loader
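The cell for this step is not shown here. A minimal sketch, assuming cross-entropy loss, plain SGD with a learning rate of 0.1, and batch sizes of 100 for training and 5000 for validation (all assumed values). The helper make_training_objects is hypothetical, and a stand-in linear model with random tensors replaces the lab's CNN and MNIST data so that the block runs on its own:

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset


def make_training_objects(model, train_dataset, validation_dataset,
                          learning_rate=0.1, train_batch=100, validation_batch=5000):
    # Cross-entropy applies log-softmax internally, so the model outputs raw scores
    criterion = nn.CrossEntropyLoss()
    optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)
    train_loader = DataLoader(dataset=train_dataset, batch_size=train_batch)
    validation_loader = DataLoader(dataset=validation_dataset, batch_size=validation_batch)
    return criterion, optimizer, train_loader, validation_loader


# Stand-ins so the sketch runs on its own: random 16x16 "images" and a linear model
demo_dataset = TensorDataset(torch.rand(200, 1, 16, 16), torch.randint(0, 10, (200,)))
demo_model = nn.Sequential(nn.Flatten(), nn.Linear(16 * 16, 10))

demo_criterion, demo_optimizer, demo_train_loader, demo_validation_loader = \
    make_training_objects(demo_model, demo_dataset, demo_dataset)

x, y = next(iter(demo_train_loader))
loss = demo_criterion(demo_model(x), y)
print(x.shape, loss.item())
```

In the lab itself, the equivalent call would be criterion, optimizer, train_loader, validation_loader = make_training_objects(model, train_dataset, validation_dataset), which binds the names used by the training loop.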

Train the model and determine the validation accuracy (technically the test accuracy, since it is computed on the testing dataset). This may take a long time.

# Train the model
n_epochs = 3
cost_list = []
accuracy_list = []
N_test = len(validation_dataset)

def train_model(n_epochs):
    for epoch in range(n_epochs):
        COST = 0
        for x, y in train_loader:
            optimizer.zero_grad()
            z = model(x)
            loss = criterion(z, y)
            loss.backward()
            optimizer.step()
            # Accumulate the loss as a Python float rather than a tensor
            COST += loss.item()
        cost_list.append(COST)

        correct = 0
        # Perform a prediction on the validation data (no gradients needed)
        with torch.no_grad():
            for x_test, y_test in validation_loader:
                z = model(x_test)
                _, yhat = torch.max(z, 1)
                correct += (yhat == y_test).sum().item()
        accuracy = correct / N_test
        accuracy_list.append(accuracy)

train_model(n_epochs)

Analyze Results

Plot the loss and accuracy on the validation data:

# Plot the loss and accuracy
fig, ax1 = plt.subplots()
color = 'tab:red'
ax1.plot(cost_list, color=color)
ax1.set_xlabel('epoch', color=color)
ax1.set_ylabel('Cost', color=color)
ax1.tick_params(axis='y', color=color)

ax2 = ax1.twinx()
color = 'tab:blue'
ax2.set_ylabel('accuracy', color=color)
ax2.set_xlabel('epoch', color=color)
ax2.plot(accuracy_list, color=color)
ax2.tick_params(axis='y', color=color)
fig.tight_layout()

View the results of the parameters for the Convolutional layers

Consider the following sample

Determine the activations

Plot out the first set of activations

The image below is the result after applying the relu activation function

The image below is the result of the activation map after the second output layer.

The image below is the result of the activation map after applying the second relu

We can see the result for the third sample

Plot the first five mis-classified samples:
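The cell for this step is not shown here. One way to find the samples, sketched under assumptions: the hypothetical helper first_misclassified walks through a dataset one sample at a time and records the index, predicted label and true label of each wrong prediction. A stand-in model and random data are used so that the block runs on its own.

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset


def first_misclassified(model, dataset, limit=5):
    # Return up to `limit` tuples of (index, predicted label, true label)
    found = []
    model.eval()
    with torch.no_grad():
        for index, (x, y) in enumerate(DataLoader(dataset=dataset, batch_size=1)):
            _, yhat = torch.max(model(x), 1)
            if yhat.item() != y.item():
                found.append((index, yhat.item(), y.item()))
            if len(found) >= limit:
                break
    return found


# Stand-in data and model so the sketch runs on its own
demo_dataset = TensorDataset(torch.rand(50, 1, 16, 16), torch.randint(0, 10, (50,)))
demo_model = nn.Sequential(nn.Flatten(), nn.Linear(16 * 16, 10))
print(first_misclassified(demo_model, demo_dataset))
```

With the trained model and the testing dataset, each returned index could be passed to show_data, for example show_data(validation_dataset[index]) followed by plt.show(), to display the misclassified image next to its predicted label.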